Probabilistic programming and data assimilation for next generation city simulation

The field of social simulation is dominated by Agent-Based Models (ABMs). Individual ‘agents’ are given simple rules, and the complex phenomena that arise from their interactions, termed emergence, can then be studied in detail. However, a major limitation of ABMs is that they can only be calibrated once, on historical data, so simulations rapidly diverge from reality (the true state). One solution is to update the model with real-time data, using a process well studied in numerical weather prediction called Dynamic Data Assimilation (DDA).

Data Assimilation techniques combine the output of a predictive model with noisy real-world observations to produce a more accurate model state.
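As a minimal illustration, assume a single scalar quantity (say, one agent's position along a corridor) and Gaussian errors in both the model forecast and the observation. Under those assumptions the combination step reduces to the scalar Kalman-filter update sketched below. The function name `assimilate` and all numbers are hypothetical; this is not the algorithm developed in this project.

```python
def assimilate(forecast: float, forecast_var: float,
               observation: float, obs_var: float) -> tuple[float, float]:
    """Combine a model forecast with a noisy observation (scalar Kalman update).

    Both inputs are treated as Gaussian estimates of the same quantity;
    each is weighted according to its variance (its uncertainty).
    """
    gain = forecast_var / (forecast_var + obs_var)   # weight given to the observation
    analysis = forecast + gain * (observation - forecast)
    analysis_var = (1.0 - gain) * forecast_var       # combined estimate is more certain
    return analysis, analysis_var


# Hypothetical numbers: the model places an agent at x = 10.0 (variance 4.0)
# and a sensor reports x = 12.0 (variance 1.0).
x, var = assimilate(10.0, 4.0, 12.0, 1.0)
print(x, var)
```

With these inputs the analysis is roughly 11.6 with variance 0.8: it sits closer to the observation because the observation is less uncertain, and it is more certain than either input on its own.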

Project aims

Whilst DDA is routinely applied in fields such as weather prediction and signal processing, very little work has applied it to ABMs.

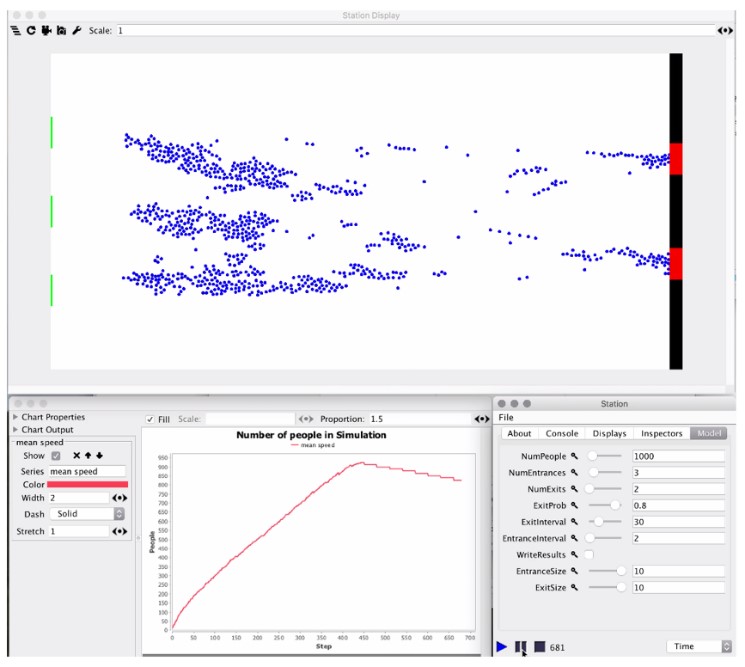

This research attempts to use Keanu – a Probabilistic Programming Library (PPL) developed by Improbable Worlds Ltd. – as a framework for data assimilation on a spatial ABM called StationSim (Figure 1), which was built with the MASON framework.

StationSim is intended as a simplified representation of passengers leaving a train and walking towards a specified exit.

Explaining the science

ABMs are restricted to a one-shot approach to calibration using historical data. Whilst these models may behave very much like the real-world phenomena they mimic, they diverge from the true state (the actual positions and movements of all real-world agents) almost immediately.

In DDA, statistical techniques are used to combine the predictions of a model with observations of the real system, accounting for the level of uncertainty in both. It is at heart a Bayesian process: we combine a prior (the model prediction) with a likelihood (the observations) to derive a more accurate posterior (the assimilated model state).
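The prior × likelihood → posterior step can be sketched on a discretised corridor. This is a hypothetical plain-Python example (the cell size, means and standard deviations are invented), not the project's implementation: the model's prediction supplies the prior over cells, a noisy sensor reading supplies the likelihood, and normalising their product gives the posterior.

```python
import math


def gaussian(x: float, mean: float, sd: float) -> float:
    """Unnormalised Gaussian density; the constant cancels when we normalise."""
    return math.exp(-0.5 * ((x - mean) / sd) ** 2)


def normalise(weights: list[float]) -> list[float]:
    """Scale non-negative weights so that they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]


def bayes_update(cells, prior, observation, obs_sd):
    """Posterior over discrete positions: prior (model) x likelihood (observation)."""
    likelihood = [gaussian(c, observation, obs_sd) for c in cells]
    return normalise([p * l for p, l in zip(prior, likelihood)])


def mode(cells, dist):
    """Cell with the highest probability."""
    return cells[dist.index(max(dist))]


cells = list(range(20))                                     # hypothetical cells 0..19
prior = normalise([gaussian(c, 8.0, 3.0) for c in cells])   # model predicts ~8, broad spread
posterior = bayes_update(cells, prior, observation=11.0, obs_sd=1.5)  # sensor reports 11

print("prior mode:", mode(cells, prior), "posterior mode:", mode(cells, posterior))
```

The prior peaks at cell 8 and the posterior at cell 10: between the prediction and the observation, but nearer the more precise observation.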

Although there are a number of well-defined DDA techniques, we saw an opportunity to investigate a new method. Probabilistic programming is the marriage of programming languages and statistical theory: it allows complex statistical models to be defined and evaluated in hours, rather than the days or weeks needed to work them through by hand. The key task for a PPL is statistical inference, which usually requires a data structure that can accurately represent a probability distribution.

To carry out inference, Keanu builds a computational graph, which it calls a Bayesian Network. This network represents the conditional dependency relationships between all vertices, probabilistic and non-probabilistic. Keanu can ‘observe’ (fix) the values of certain vertices to match the observed data. It then calculates the most likely values of their parent and child vertices, in a cascade of calculations that continues until it has found the most likely state of the entire network. Here the Bayesian Network encodes our prior prediction; after the observations are applied, the posterior is the updated model state.
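Keanu's own Java API and inference algorithms are not reproduced here. Instead, the plain-Python sketch below illustrates the idea on a tiny hypothetical network: a latent parent vertex (which exit an agent is heading for) with two child vertices (the agent's observed heading and its unobserved speed). Fixing an observed vertex and searching for the jointly most likely assignment (maximum a posteriori, here by brute-force enumeration) shows how observing one vertex changes the most likely values of the others. All vertex names and probabilities are invented.

```python
from itertools import product

# Hypothetical conditional probability tables (all numbers are illustrative).
EXIT_PRIOR = {"top": 0.5, "bottom": 0.5}          # parent vertex
HEADING_GIVEN_EXIT = {                            # child vertex (observable)
    "top": {"up": 0.9, "down": 0.1},
    "bottom": {"up": 0.2, "down": 0.8},
}
SPEED_GIVEN_EXIT = {                              # child vertex (unobserved)
    "top": {"fast": 0.6, "slow": 0.4},
    "bottom": {"fast": 0.3, "slow": 0.7},
}


def joint(exit_: str, heading: str, speed: str) -> float:
    """P(exit, heading, speed), factorised along the edges of the network."""
    return (EXIT_PRIOR[exit_]
            * HEADING_GIVEN_EXIT[exit_][heading]
            * SPEED_GIVEN_EXIT[exit_][speed])


def map_state(observed: dict[str, str]) -> dict[str, str]:
    """Most likely value of every vertex, with observed vertices held fixed."""
    best, best_p = None, -1.0
    for exit_, heading, speed in product(EXIT_PRIOR, ("up", "down"), ("fast", "slow")):
        state = {"exit": exit_, "heading": heading, "speed": speed}
        if any(state[name] != value for name, value in observed.items()):
            continue  # this assignment contradicts the observation
        p = joint(exit_, heading, speed)
        if p > best_p:
            best, best_p = state, p
    return best


print(map_state({}))                  # prior: nothing observed
print(map_state({"heading": "up"}))   # posterior after observing the heading
```

With no observations the most likely state is `bottom`/`down`/`slow`; observing `heading = "up"` flips the most likely exit to `top` and, through that shared parent, the unobserved speed to `fast`. Enumeration is only feasible for very small networks; practical probabilistic programming libraries rely on optimisation or sampling instead.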

Figure 1. StationSim. This image shows the ABM we used to develop the DA algorithm. Small circular agents (blue) start at an entrance on the left (green) and move towards one of the two exits on the right-hand side (red).

Results

This research proved extremely challenging, as Keanu and MASON are both complex libraries, for very different reasons. MASON attempts to handle the complicated parts of agent-based modelling behind the scenes, hidden from the user, whereas Keanu is at a pre-alpha release stage with limited documentation.

Using a pair of digital twins, one producing data and the other attempting to assimilate it, we have produced a functional data assimilation algorithm with Keanu. However, the algorithm is not yet finished and needs further improvement to be useful. It shows a clear transformation of the state between assimilation windows when data are observed, but it must become more accurate before it can be applied to a real-world system.
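A twin set-up of this kind can be sketched in a few lines. In this hypothetical one-dimensional version (not StationSim or Keanu), twin 1 moves an agent along a line with random jitter and emits a noisy observation at the end of each assimilation window; twin 2 runs a model with a deliberately wrong speed and corrects itself with a scalar Kalman update at each window. A copy of twin 2 that never sees data shows how far the model drifts without assimilation. Speeds, noise levels and window lengths are all assumptions.

```python
import random


def kalman_update(mean, var, obs, obs_var):
    """Scalar Kalman analysis step: blend forecast and observation by their variances."""
    gain = var / (var + obs_var)
    return mean + gain * (obs - mean), (1.0 - gain) * var


def twin_experiment(n_windows=10, ticks_per_window=5, seed=1):
    """Return (error with assimilation, error without) after the final window."""
    rng = random.Random(seed)
    true_speed, model_speed = 1.0, 0.8   # hypothetical: twin 2's speed is wrong
    process_sd, obs_sd = 0.3, 0.5        # hypothetical noise levels

    truth = 0.0            # twin 1: stands in for the real world
    mean, var = 0.0, 0.0   # twin 2: estimate of the agent's position and its variance
    free_run = 0.0         # twin 2 without assimilation, for comparison

    for _ in range(n_windows):
        for _ in range(ticks_per_window):
            truth += true_speed + rng.gauss(0.0, process_sd)
            mean += model_speed
            var += process_sd ** 2       # uncertainty grows between observations
            free_run += model_speed
        observation = truth + rng.gauss(0.0, obs_sd)   # noisy data from twin 1
        mean, var = kalman_update(mean, var, observation, obs_sd ** 2)

    return abs(mean - truth), abs(free_run - truth)


assimilated_err, free_err = twin_experiment()
print(f"error with assimilation: {assimilated_err:.2f}")
print(f"error without:           {free_err:.2f}")
```

Because twin 2's speed is wrong, the free-running copy falls further behind every window, while the assimilated copy is pulled back towards the truth whenever an observation arrives.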

Applications

There are many tangible applications of this research: an assimilated spatial ABM would be useful wherever real-time knowledge of people’s positions and movements is valuable. For example, ABMs have been used to improve evacuation and disaster planning. Knowing the exact positions of people in a coastal town during a tsunami warning would allow better traffic management and more efficient use of shelters.

Research Team

Luke Archer, Prof. Nick Malleson, Dr. Jon Ward – University of Leeds

Funders / Partners

This project was partly funded by Improbable Worlds Ltd. and makes extensive use of their probabilistic programming library Keanu.